The Headlines Say “Loathe,” The Data Says “Wary”

Pew Research recently released two major studies on AI, one tracking American sentiment and the other scanning the global landscape. The headlines are stark. In the US, 53% of adults believe AI will worsen human creativity. Half say it will worsen our ability to form meaningful relationships. Only 5% think it will improve that ability. Globally, across 25 countries, not a single nation has a majority of adults who are “more excited than concerned.” The most concerned populations are in the US, Italy, Australia, and Brazil.

Meanwhile, some media outlets are running headlines screaming that “Americans Loathe AI.” The media narrative is one of total rejection. A cultural death knell. But that is a superficial reading. It mistakes the noise for the signal. This is not a rejection of AI. It is the sound of adoption hitting severe growing pains. And frankly, we should have expected it.

The Noise of Hype vs. The Signal of Utility

The data reveals a specific, nuanced frustration that the “loathe” headlines miss. It is not that people hate the technology. They hate the rollout.

Consider the “shallow familiarity” paradox. A study cited in the reporting found that AI’s biggest fans tend to have the shallowest familiarity with the tech. The people using it most deeply, the engineers and power users, are often the most cautious. The general public, flooded with hype and bad implementations, is reacting to the marketing, not the technology. They see AI jammed into every app where it serves no purpose. They see the “wild proliferation” of models that hallucinate facts and generate generic slop. They see the billions in investment demanding returns, and that pressure is distorting the product. People trust Microsoft. They tolerate Google. They once admired Musk for Tesla. But the aggressive, top-down imposition of AI is generating genuine resistance.

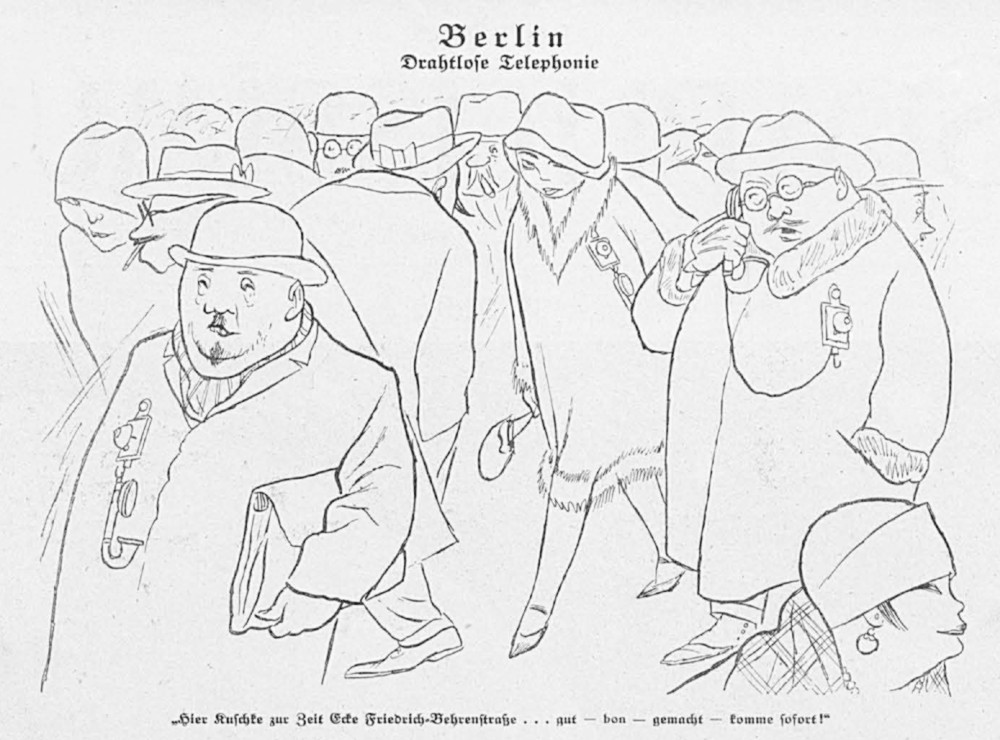

The Primal Reflex: When History Repeats Itself

Then there is the primal fear of change. The train, electricity, the punch card, the mobile phone, the internet. When humans cannot immediately visualize the upside, we amplify the downside. We blame AI for making students lazy instead of redesigning education. We fear it drives people to suicide instead of building better mental health tools. We point to hallucinations while ignoring that the infamous uncle at Christmas dinner lies too. We claim it destroys art, forgetting that the camera and the synthesizer once supposedly threatened painters and musicians. We worry it steals jobs while ignoring that it creates many new ones too. (The fear is valid now, regardless of the long-term outcome.) The breakneck speed of this rollout forces us to close the skills gap, and that is where the real work begins.

The Demand for Labels, Not Bans

The Pew data also highlights a critical demand for transparency. 76% of respondents say it is “extremely or very important” to be able to tell if content is made by AI, yet 53% lack the confidence to do so. This is a demand for a label, not a rejection. Just as we require ingredient lists and nutrition facts on food packaging, the public wants to know what it is consuming. The 80% who believe presenting AI content as human-made should be illegal are not asking for a ban; they are asking for honesty.

The Trust Deficit: A Crisis of Governance, Not Code

These reflexes are human. They are a necessary check to ensure we manage AI wisely over the long term. The skepticism is less about the tool and more about the industry. The absence of coherent policy and regulation is a crisis of trust, not a rejection of the technology itself.

The fact that most people cannot distinguish a human voice from a synthetic one proves how advanced the tech has become. (Amazing!) That carries risk, yes. And it demands rules. Just like traffic laws. Or a monopoly on violence. Not everyone gets to fire a gun.

The US is losing its moral authority on AI, and the world is noticing. When the originator of the technology cannot be trusted to govern it, the global community looks elsewhere. This is not a victory for authoritarianism; it is a failure of democratic oversight. The gap between what we promise and what we deliver has become a canyon.

Slowing Down to Speed Up

We need to slow down. Not to stop, but to steer. The rush to integrate AI everywhere has left us with a tool that is powerful but directionless. We are trying to drive a Formula 1 car on a dirt road with no brakes, and then acting surprised when people are scared to get in the passenger seat. The solution is not to smash the car. It is to build the track.

The Path Forward

We must prioritize human agency over algorithmic speed. Slowing down allows us to ask the hard questions: Who benefits? Who loses? Where are the safeguards?

If you are a leader, stop forcing adoption metrics and start building trust frameworks. If you are a worker, stop fearing replacement and start mastering the craft of human-AI collaboration. The future does not belong to the machines. It belongs to the humans who know how to steer them.

Let’s hit the brakes, look at the road, and decide where we are actually going.